regularization machine learning mastery

This is called weight regularization, and it can be used as a general technique to reduce overfitting to the training dataset and improve the generalization of the model. This is an important theme in machine learning.

A Simple Explanation Of Regularization In Machine Learning Nintyzeros

Regularization in Machine Learning What is Regularization.

Dropout is a simple and powerful regularization technique for neural networks and deep learning models. It is a technique to prevent the model from overfitting. The regularization penalty is commonly written as a function R(W).

We can do this by simply inserting a new Dropout layer between the hidden layer and the output layer. Machine learning involves equipping computers to perform specific tasks without explicit instructions. The post "Introduction to Regularization to Reduce Overfitting of Deep Learning Neural Networks" appeared first on Machine Learning Mastery.
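As a rough illustration of placing dropout between the hidden layer and the output layer, here is a minimal pure-Python sketch. It is not the Keras code from the original post; the helper names (`dropout`, `dense`, `relu`, `forward`) and the `params` layout are hypothetical, and the "inverted dropout" scaling used here is an assumption about how the layer behaves.

```python
import random


def dropout(activations, rate, rng, training=True):
    """Inverted dropout: zero each activation with probability `rate` and
    scale the survivors by 1 / (1 - rate) so the expected value is unchanged."""
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        # At inference time dropout is a no-op.
        return list(activations)
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


def dense(inputs, weights, biases):
    """Fully connected layer: one output per row of `weights`."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def relu(values):
    return [max(0.0, v) for v in values]


def forward(x, params, rate, rng, training):
    """Hidden layer -> dropout -> output layer (hypothetical two-layer net)."""
    hidden = relu(dense(x, params["W1"], params["b1"]))
    hidden = dropout(hidden, rate, rng, training)  # sits between hidden and output
    return dense(hidden, params["W2"], params["b2"])


# Illustrative weights only; they are not taken from the original post.
params = {
    "W1": [[1.0, 0.0], [0.0, 1.0]], "b1": [0.0, 0.0],
    "W2": [[1.0, 1.0]], "b2": [0.0],
}
rng = random.Random(0)
print(forward([1.0, 2.0], params, rate=0.5, rng=rng, training=True))
print(forward([1.0, 2.0], params, rate=0.5, rng=rng, training=False))
```

During training the output varies from call to call because different hidden units are dropped; with `training=False` the network is deterministic.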

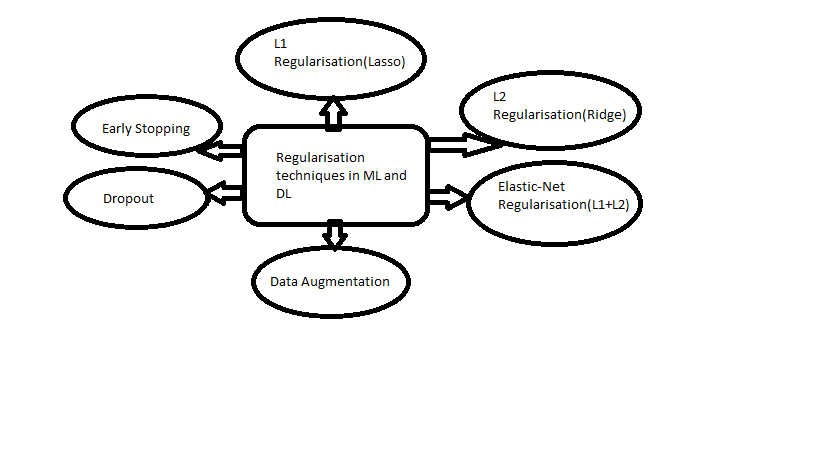

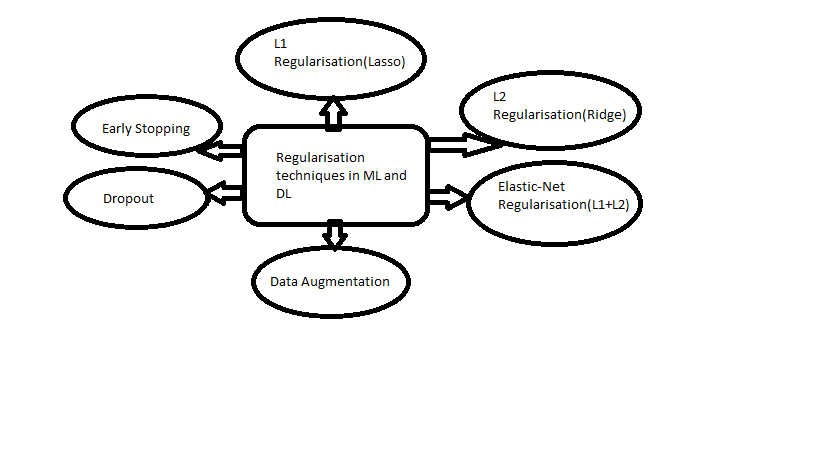

Regularization describes methods for calibrating machine learning models to reduce the adjusted loss function and avoid overfitting or underfitting. It is one of the most important concepts of machine learning. In this post you will discover the dropout regularization technique.

Regularization is one of the most basic and important concepts in the world of machine learning. Regularization can be implemented in. This technique prevents the model from overfitting by adding extra information to it.

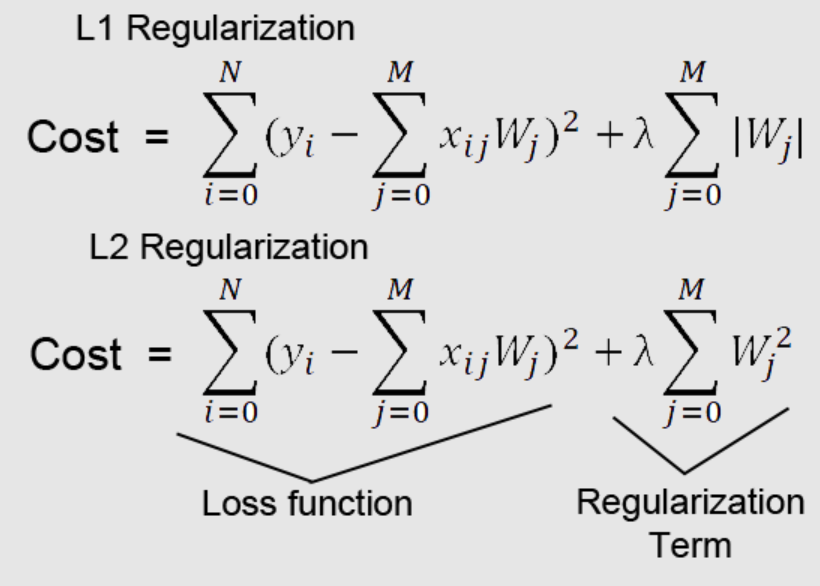

$\text{Regularization} = \text{Loss Function} + \text{Penalty}$. There are three commonly. Part 1 deals with the theory. Regularization is one of the most important concepts of machine learning.

Last Updated on August 6, 2022. We can update the example to use dropout regularization. To put it simply, it is a technique to prevent the machine learning model from overfitting by taking preventive measures.

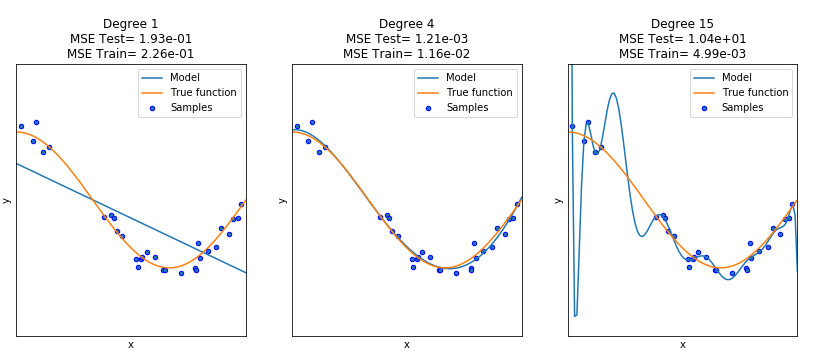

In Figure 4, the black line represents a model without Ridge regression applied and the red line represents a model with Ridge regression applied. Note how much smoother the red line is. Regularization is one of the techniques used to control overfitting in high-flexibility models. We can properly fit our.
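To make the smoothing effect of Ridge regression concrete, here is a small sketch for the simplest possible case: one feature and no intercept. The function name `ridge_slope`, the penalty strength `lam`, and the toy data are all illustrative assumptions rather than anything taken from the figure.

```python
def ridge_slope(xs, ys, lam):
    """Slope w that minimises sum((y - w*x)**2) + lam * w**2.

    Setting the derivative to zero gives w = sum(x*y) / (sum(x*x) + lam).
    With lam = 0 this is ordinary least squares; a larger lam shrinks w
    towards zero. Assumes sum(x*x) + lam is non-zero.
    """
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


# Toy data, made up for illustration.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.1, 3.9, 6.2, 7.8]

for lam in (0.0, 1.0, 10.0, 100.0):
    print(f"lam={lam:>6}: slope={ridge_slope(xs, ys, lam):.4f}")
```

As `lam` grows the fitted slope moves towards zero, which is the one-dimensional analogue of the flatter, smoother curve that a Ridge penalty produces.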

Regularization This is a form of. While regularization is used with many. Regularization is used in machine learning as a solution to overfitting by reducing the variance of the ML model under consideration.

The answer is to define a regularization penalty, a function that operates on our weight matrix. Technically, regularization avoids overfitting by adding a penalty to the model's loss function. A regression model that uses the L1 regularization technique is called Lasso Regression, and a model that uses L2 is called Ridge Regression.
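The penalty described above can be sketched in a few lines of Python. The names `l1_penalty`, `l2_penalty`, `regularized_loss`, and the strength parameter `lam` are hypothetical, and the weight matrix is made up for illustration; the L2 penalty here is the plain sum of squares, although some texts add a factor of 1/2.

```python
def l1_penalty(W):
    """L1 (Lasso-style) penalty: sum of absolute values of all weights."""
    return sum(abs(w) for row in W for w in row)


def l2_penalty(W):
    """L2 (Ridge-style) penalty: sum of squares of all weights."""
    return sum(w * w for row in W for w in row)


def regularized_loss(data_loss, W, lam, penalty):
    """Total loss = data loss + lam * R(W), where R is the chosen penalty."""
    return data_loss + lam * penalty(W)


# Illustrative weight matrix as a list of rows.
W = [[0.5, -1.0], [2.0, 0.0]]

print("L1 penalty:", l1_penalty(W))
print("L2 penalty:", l2_penalty(W))
print("Loss with L2:", regularized_loss(1.0, W, lam=0.1, penalty=l2_penalty))
```

The penalty is passed in as a function so that swapping between the L1 and L2 variants does not change the rest of the loss calculation.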

I have covered the entire concept in two parts. Regularization is among the most crucial concepts of machine learning. So the systems are programmed to learn and improve from experience.

It is a form of regression.

Regularization In Machine Learning Regularization Example Machine Learning Tutorial Simplilearn Youtube

What Are The Main Regularization Methods Used In Machine Learning Quora

Regularization Techniques For Image Processing Using Tensorflow By Odemakinde Elisha Heartbeat

Regularization Techniques Regularization In Deep Learning

Understanding Regularization In Machine Learning By Ashu Prasad Towards Data Science

Regularisation Techniques In Machine Learning And Deep Learning By Saurabh Singh Analytics Vidhya Medium

Linear Algebra For Machine Learning Sample Pdf Basics Of Linear Algebra For Machine Learning Discover The Mathematical Language Of Data In Course Hero

Regularization In Machine Learning Concepts Examples Data Analytics

A Gentle Introduction To Activation Regularization In Deep Learning

40 Must Read Ai Machine Learning Blogs

Fighting Overfitting With L1 Or L2 Regularization Which One Is Better Neptune Ai

How To Use Weight Decay To Reduce Overfitting Of Neural Network In Keras

Techniques For Handling Underfitting And Overfitting In Machine Learning By Manpreet Singh Minhas Towards Data Science

Regularization In Machine Learning Simplilearn

What Is Activation In Convolutional Neural Networks Quora

Use Weight Regularization To Reduce Overfitting Of Deep Learning Models

From Machine Learning To Reinforcement Learning Mastery By Shubham Kumar Better Programming